The Department of Homeland Security has seen the opportunities and risks of artificial intelligence first-hand. It found a human trafficking victim years later using an AI tool that generated an image of the child 10 years older. However, its investigations have also been fooled by AI-generated deepfake images.

Now, the department is becoming the first federal agency to adopt this technology, with plans to incorporate generative AI models across a wide range of divisions. In partnership with OpenAI, Anthropic, and Meta, it plans to launch pilot programs using chatbots and other tools to combat drug and human trafficking crimes, train immigration officials, and support national emergency management preparedness.

The rush to deploy the as-yet-unproven technology is part of a larger race to keep pace with changes brought about by generative AI, which can create hyper-realistic images and videos and mimic human speech.

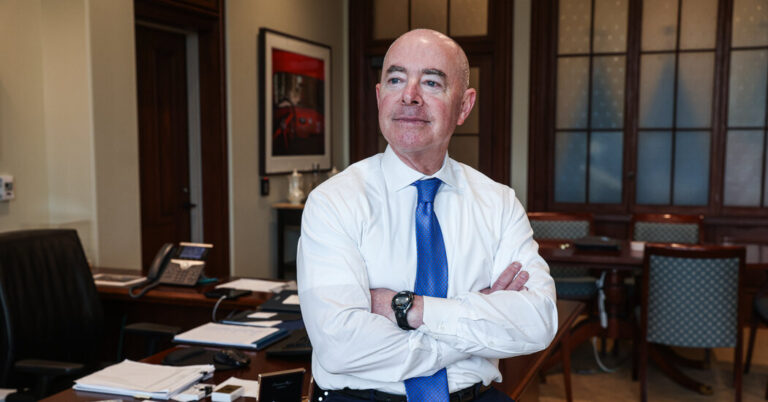

“We can't ignore it,” Homeland Security Secretary Alejandro Mayorkas said in an interview. “And if we are not prepared to recognize and address the potential for good and the potential for harm, it will be too late. That's why we're acting quickly.”

Plans to incorporate generative AI across the agency are the latest demonstration of how new technologies like OpenAI's ChatGPT are forcing even the most staid industries to reevaluate how they do business. Still, government agencies like DHS are likely to face some of the toughest scrutiny over how they use this technology, which has stirred debate because it has at times been found to be unreliable and discriminatory.

Federal officials are rushing to formulate a plan following President Biden's order late last year mandating the creation of safety standards for AI and its implementation across the federal government.

DHS, which employs 260,000 people, was created after the Sept. 11 terrorist attacks and is tasked with protecting Americans within the country's borders, including combating human and drug trafficking, protecting critical infrastructure, responding to disasters, and securing the border.

As part of that plan, the agency plans to hire 50 AI experts to work on solutions to protect the nation's critical infrastructure from AI attacks and to combat the use of the technology to produce child sexual abuse material and manufacture biological weapons.

In the $5 million pilot program, the agency will use AI models like ChatGPT to help investigate child abuse materials, human trafficking, and drug trafficking. The agency also plans to work with companies to comb through its text-based data to find patterns that can help investigators. For example, a detective searching for a suspect driving a blue pickup truck will be able to search for the same type of vehicle across Homeland Security investigations for the first time.

DHS plans to use chatbots to train immigration officials, who until now have practiced with other employees and contractors posing as refugees and asylum seekers. With AI tools, officials can get more training through mock interviews. The chatbots will also gather information about communities across the country to help create disaster relief plans.

Eric Hysen, the department's chief information officer and head of AI, said the department plans to report on the results of the pilot program by the end of the year.

The agency selected OpenAI, Anthropic, and Meta to experiment with different tools, and plans to use cloud providers Microsoft, Google, and Amazon in the pilot program. “We can’t do this alone,” he said. “We need to work with the private sector to help define what is responsible use of generative AI.”