
The rise of generative AI tools may have leveled the playing field for on-page SEO. But search engines are smart.

Recent updates to search algorithms and ranking systems give user experience (UX) and technical SEO a larger role than before in search rankings for B2B websites.

Technical SEO presents your website's content and design elements in a way that search engines can understand. Without it, your content won't rank, no matter how much value your readers get from it.

In this article, you'll learn basic technical SEO best practices to build a strong online brand, outperform your competitors, and drive qualified traffic to your website.

The role of AI in SEO

Search engines share one goal: to show users the most relevant and helpful content on search engine results pages (SERPs) so they can solve their problems.

There are over 6 billion web pages on the web (and counting). Search engines determine which sites to display through two different processes:

- The first is crawling. As the name suggests, a search bot crawls the web to find newly published or updated content.

- Once a page is found, the bot begins the next process, called indexing. This process is more complex: the bot analyzes each piece of content against search ranking factors and signals, and assesses its relevance to search queries.

Generative AI tools like ChatGPT have made content creation less difficult. However, these tools don't always produce structured content that can be easily crawled and indexed by search engine bots.

That's why technical SEO is important for a successful B2B SEO strategy. It allows search bots to easily access and understand the content of your website, index it, and ultimately display it in relevant search results.

Initial website audit

Every successful SEO strategy starts with conducting a comprehensive website audit. This helps identify crawling and indexing issues and their causes.

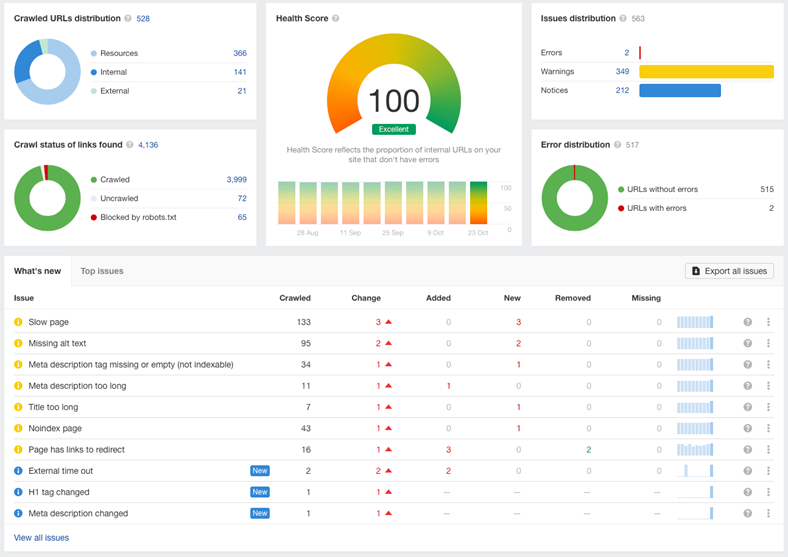

Ahrefs and other similar tools can speed up the process by scanning your website for these issues and compiling them into comprehensive reports such as:

Sample website audit report generated by Ahrefs

For example, the report above shows that some web pages are missing required meta tags. You can then click on each of the missing meta tags to get a list of affected web page URLs and resolve the issue.
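A check like the missing-meta-tag report can be approximated in a few lines. The following is a minimal Python sketch, not a description of how Ahrefs works internally. The `REQUIRED` list (title, meta description, viewport) and the names `MetaTagAudit` and `missing_tags` are assumptions chosen for illustration.

```python
from html.parser import HTMLParser

# Assumed set of tags to look for; adjust to your own audit criteria.
REQUIRED = ("title", "meta description", "viewport")


class MetaTagAudit(HTMLParser):
    """Record which of the audited tags are present and non-empty."""

    def __init__(self):
        super().__init__()
        self.found = set()
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        content = (attrs.get("content") or "").strip()
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and name == "description" and content:
            self.found.add("meta description")
        elif tag == "meta" and name == "viewport" and content:
            self.found.add("viewport")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        # A <title> only counts if it contains visible text.
        if self._in_title and data.strip():
            self.found.add("title")


def missing_tags(html):
    """Return the audited tags that the HTML document lacks."""
    parser = MetaTagAudit()
    parser.feed(html)
    parser.close()
    return [tag for tag in REQUIRED if tag not in parser.found]


page = "<html><head><title>Pricing</title></head><body></body></html>"
print(missing_tags(page))
```

Running this over the HTML of every page gives a rough per-URL list of what to fix, similar in spirit to the list of affected URLs in an audit report.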

Website structure optimization

Search engines care about a website's UX for two reasons.

First, a good website UX makes it easier for website visitors to find what they're looking for, which improves average time on page, click-through rate (the percentage of website visitors who click on a link on a web page), and other indicators.

Second, it directly affects the ability of search bots to crawl and index web pages.

In the fifth episode of the Search Off the Record podcast, Google developer advocate Martin Splitt explains that search bots have a crawl budget: the number of web pages they can crawl in a given time period.

If your website structure is complex and unorganized, search bots may not be able to complete the crawl within the allotted time. As a result, some pages of your website will not be crawled, indexed, or included in SERPs for related queries.

To make your site easier and faster to crawl, organize your web pages so that users can find what they're looking for in up to three clicks.

This prevents users from getting frustrated while navigating through your website. It also minimizes the chance that search bots will exceed their crawl budgets and ensures that every page on your site is crawled and indexed.
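The three-click guideline can be checked mechanically if you have a map of your internal links. The sketch below is one way to do it in Python, using a breadth-first search from the home page. The `links` dictionary, the example paths, and the function names are hypothetical; in practice the link map would come from a crawl of your own site.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def structure_problems(links, home, max_clicks=3):
    """Return (pages deeper than max_clicks, pages not reachable from home)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(p for p, d in depths.items() if d > max_clicks)
    unreachable = sorted(all_pages - set(depths))
    return too_deep, unreachable


# Hypothetical internal-link map: page -> pages it links to.
links = {
    "/": ["/products", "/blog"],
    "/products": ["/products/a"],
    "/products/a": ["/products/a/specs"],
    "/products/a/specs": ["/products/a/specs/pdf"],
    "/blog": [],
    "/old-landing": ["/"],
}

too_deep, unreachable = structure_problems(links, "/")
print("More than 3 clicks away:", too_deep)
print("Not linked from anywhere reachable:", unreachable)
```

Pages in the first list are candidates for a shortcut link from a higher-level page; pages in the second list have no internal path from the home page at all, so they depend on the sitemap or external links to be discovered.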

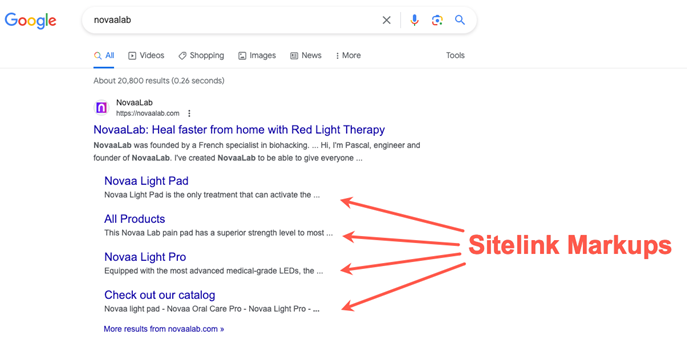

Another way to optimize your site's structure is to implement schema markup (structured code that describes the elements of your website in a way that search engines can easily understand). It also lets search results show users more detailed information about the website.

For example, sitelink schema markup allows our client, Novaalab, to include links to product pages, making them easier to find and access.
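Schema markup is commonly added to a page as a JSON-LD block. The following Python sketch generates one for a product page using the schema.org `Product` type. It is an illustration only, not the markup used on the client site mentioned above; the function name and all example values are hypothetical.

```python
import json


def product_json_ld(name, url, description):
    """Build a JSON-LD <script> tag describing a product page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "url": url,
        "description": description,
    }
    # Escape "</" so a value can never close the <script> tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values for illustration.
print(product_json_ld(
    "Example Desk Lamp",
    "https://www.example.com/products/desk-lamp",
    "An adjustable LED desk lamp.",
))
```

The resulting tag goes into the page's HTML. Whether a search engine uses it for enhanced results is up to the search engine, so it is worth validating the output with a structured data testing tool.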

Improve website speed and mobile optimization

More than half (55%) of search traffic comes from mobile devices, so optimizing your website with a mobile-first approach is essential.

Page load time is one of the factors that determines a website's UX, especially when viewed on a mobile device.

64% of mobile users expect websites to load within 4 seconds. If a page takes any longer than that, they leave.

Various factors can cause slow page load times.

- One is render-blocking JavaScript, which is common on websites with many interactive elements. Before displaying a page, the browser loads and runs the JavaScript files embedded in it so that the page functions as intended. This is called render blocking because the browser won't display the page until those files have been loaded, which slows down page load times.

- Another is that your website is not connected to a content delivery network (CDN), a network of servers in different locations that hold copies of your website. With a CDN, a visitor's browser downloads a copy of the website from the server closest to the visitor's location, reducing loading time.
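To make the first cause concrete, the sketch below scans a page's HTML for external scripts in the `<head>` that have neither an `async` nor a `defer` attribute, which is a common source of render blocking. This is a rough heuristic in Python, not a replacement for a proper performance audit; the class and function names and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Collect <head> scripts that load synchronously."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            attrs = dict(attrs)
            src = attrs.get("src")
            # Module scripts are deferred by default, so they are skipped.
            is_module = (attrs.get("type") or "").lower() == "module"
            non_blocking = "async" in attrs or "defer" in attrs or is_module
            if src and not non_blocking:
                self.blocking.append(src)

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def find_blocking_scripts(html):
    """Return the src of each likely render-blocking script."""
    finder = BlockingScriptFinder()
    finder.feed(html)
    finder.close()
    return finder.blocking


html = (
    '<html><head>'
    '<script src="/js/slider.js"></script>'
    '<script src="/js/analytics.js" async></script>'
    '<script src="/js/menu.js" defer></script>'
    '</head><body><script src="/js/footer.js"></script></body></html>'
)
print(find_blocking_scripts(html))
```

Scripts flagged this way are candidates for `defer` or `async`, provided the page does not depend on them running before first paint.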

Google's PageSpeed Insights tool, along with other tools, can help you identify issues that affect your site's load time on desktop and mobile.

You can then share the report with your website developer or B2B SEO service provider who specializes in technical SEO to resolve these issues.

Leverage XML sitemaps and robots.txt

XML sitemaps and robots.txt tell search bots which pages you want them to crawl and index, and which pages you don't want them to visit. Together, they help you conserve your website's crawl budget.

They also support your lead generation efforts by ensuring that things like gated content are not indexed and do not appear in SERPs.
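Before publishing a robots.txt file, it helps to confirm that its rules block and allow the pages you intend. The sketch below uses Python's standard `urllib.robotparser` module to test a set of URLs against a rule set. The rules, paths, and domain are hypothetical examples, not recommendations for any particular site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep bots away from gated downloads and
# post-signup pages, and point them at the sitemap.
ROBOTS_TXT = """\
User-agent: *
Disallow: /downloads/
Disallow: /thank-you/

Sitemap: https://www.example.com/sitemap.xml
"""


def crawlable(robots_txt, urls, user_agent="Googlebot"):
    """Map each URL to True if the rules let user_agent crawl it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {url: parser.can_fetch(user_agent, url) for url in urls}


urls = [
    "https://www.example.com/blog/technical-seo",
    "https://www.example.com/downloads/whitepaper.pdf",
    "https://www.example.com/thank-you/",
]
for url, allowed in crawlable(ROBOTS_TXT, urls).items():
    print("crawl" if allowed else "skip ", url)
```

Keep in mind that a `Disallow` rule controls crawling rather than indexing: a blocked URL can still show up in results if other sites link to it, so content that must stay out of the index usually also needs a `noindex` directive or a login.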

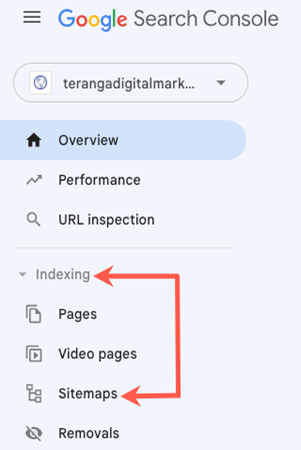

Therefore, it's important to submit an updated XML sitemap to Google every time you make a major update to your website, such as adding a client portal to store all your intake forms and track your services.

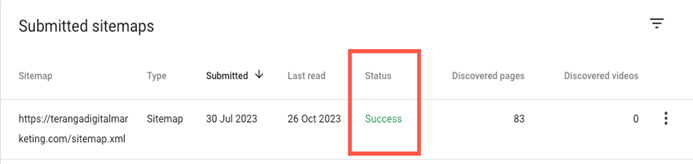

To do this, go to the left sidebar of your website's Google Search Console account. Under "Indexing," click "Sitemaps."

Under "Add a new sitemap," paste the sitemap URL and click "Submit."

GSC will notify you when Google completes processing your sitemap.
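The sitemap you submit is an XML file in the sitemaps.org format. Many content management systems generate it automatically; where that is not the case, a minimal Python sketch like the one below can build one from a list of pages. The function name, URLs, and dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from (url, last_modified) pairs.

    last_modified is a date string in YYYY-MM-DD form.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; list only the URLs you want indexed.
pages = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/services", "2024-01-10"),
]
print(build_sitemap(pages))
```

Save the output as a file such as `sitemap.xml` at a public URL on your site, then paste that URL into the submission form described above.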

Website performance monitoring and analysis

Applying technical SEO best practices is not a one-time task. It is an ongoing process in which you regularly check your technical SEO metrics so that you can quickly identify and resolve any issues that affect your site's search visibility and rankings.

At least once a month, run a site health check for broken links, unindexed pages, and pages that search bots can't access. That way, you can make the necessary changes to resolve the issues and improve your website's performance in the SERPs.
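The broken-link part of a health check can be scripted. The following is a minimal Python sketch using only the standard library: it requests each URL and reports those that return an error status or fail to respond. The function names and URLs are hypothetical, and dedicated audit tools cover far more than this.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if no response arrived."""
    # HEAD avoids downloading the body; some servers reject it, in which
    # case a GET request would be the fallback.
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # 4xx and 5xx responses land here
    except OSError:
        return None  # DNS failure, refused connection, timeout


def find_broken_links(urls, fetch=fetch_status):
    """Return {url: status} for URLs that errored or did not respond."""
    broken = {}
    for url in urls:
        status = fetch(url)
        if status is None or status >= 400:
            broken[url] = status
    return broken


if __name__ == "__main__":
    # Hypothetical URLs; replace with the pages listed in your sitemap.
    report = find_broken_links([
        "https://www.example.com/",
        "https://www.example.com/old-page",
    ])
    for url, status in report.items():
        print(status, url)
```

The `fetch` parameter lets you swap in a different request function, for example one that adds authentication or rate limiting, without changing the reporting logic.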

Also, make it a habit to stay informed of the latest SEO industry trends and algorithm updates from Google and SEO industry experts. By doing so, you can adjust your SEO strategy and stay ahead of your competitors.

* * *

Even if you have a unique, user-friendly, and responsive website filled with valuable content, it won't help you achieve your business goals if search engines can't find it and share it with users.

Implementing the suggestions in this article will help search bots better crawl and index your website's important pages, increasing your website's chances of being found and visited by your target audience.
