
On Tuesday, Microsoft announced Phi-3-mini, a new, freely available lightweight AI language model. It is simpler and less expensive to operate than traditional large language models (LLMs) like OpenAI's GPT-4 Turbo. Its small size makes it well suited to running locally, which could bring an AI model with capability similar to the free version of ChatGPT to a smartphone without needing an internet connection.

In the AI field, the size of a language model is typically measured by its number of parameters. Parameters are numerical values in a neural network that determine how the language model processes and generates text. They are learned during training on large datasets and essentially encode the model's knowledge in quantified form. In general, more parameters allow a model to capture more nuanced and complex language-generation capabilities, but they also require more computational resources to train and run.

Some of today's largest language models, such as Google's PaLM 2, have hundreds of billions of parameters. OpenAI's GPT-4 is rumored to have over 1 trillion parameters, spread across eight 220-billion-parameter models in a mixture-of-experts configuration. Both models require heavy-duty data center GPUs (and supporting systems) to run properly.
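To see how the rumor adds up to "over 1 trillion," here is a minimal arithmetic sketch. It takes the rumored figures at face value (eight experts of roughly 220 billion parameters each) and naively sums them; the function name is hypothetical, the numbers are unconfirmed by OpenAI, and a real mixture-of-experts model may share some layers between experts, which this ignores.

```python
# Rumored figures quoted in the article; not confirmed by OpenAI.
EXPERTS = 8
PARAMS_PER_EXPERT = 220e9


def total_parameters(experts: int, params_per_expert: float) -> float:
    """Naive total: sums every expert and ignores any shared layers."""
    return experts * params_per_expert


total = total_parameters(EXPERTS, PARAMS_PER_EXPERT)
print(f"{total / 1e12:.2f} trillion parameters")
```

A mixture-of-experts model typically routes each token through only a few experts, so the parameters active per token can be far fewer than this total.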

In contrast, Microsoft went small with Phi-3-mini, which contains only 3.8 billion parameters and was trained on 3.3 trillion tokens. That makes it well suited to running on consumer-grade GPUs or the AI acceleration hardware found in smartphones and laptops. It is a follow-up to two previous small language models from Microsoft: Phi-2, released in December, and Phi-1, released in June 2023.
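A rough back-of-the-envelope sketch shows why a 3.8-billion-parameter model is plausible on consumer hardware. It estimates the memory needed just to hold the weights at several numeric precisions. The bytes-per-parameter values and the function name are illustrative assumptions, not figures from Microsoft, and the estimate leaves out runtime overhead such as activations and the attention cache.

```python
PHI3_MINI_PARAMS = 3.8e9  # parameter count reported in the article

# Assumed storage size per parameter at common precisions (illustrative).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in GiB (no activations or cache)."""
    return num_params * bytes_per_param / 2**30


for precision, size in BYTES_PER_PARAM.items():
    gib = weight_memory_gib(PHI3_MINI_PARAMS, size)
    print(f"{precision}: {gib:.1f} GiB")
```

Under these assumptions, halving the bytes stored per parameter halves the weight footprint, which is why quantized builds are the usual choice for phones and small single-board computers.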

Phi-3-mini features a 4,000-token context window, but Microsoft also introduced a 128K-token version called “phi-3-mini-128K.” Microsoft has also created 7-billion and 14-billion parameter versions of Phi-3 that it plans to release later and that it claims are “significantly more capable” than phi-3-mini.

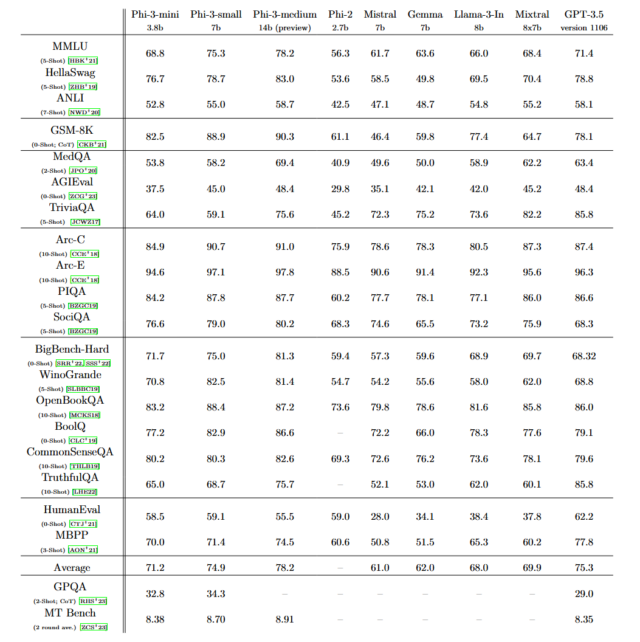

Microsoft says that Phi-3's overall performance “rivals that of models such as Mixtral 8x7B and GPT-3.5,” as detailed in a paper titled “Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone.” Mixtral 8x7B, from French AI company Mistral, uses a mixture-of-experts model, and GPT-3.5 powers the free version of ChatGPT.

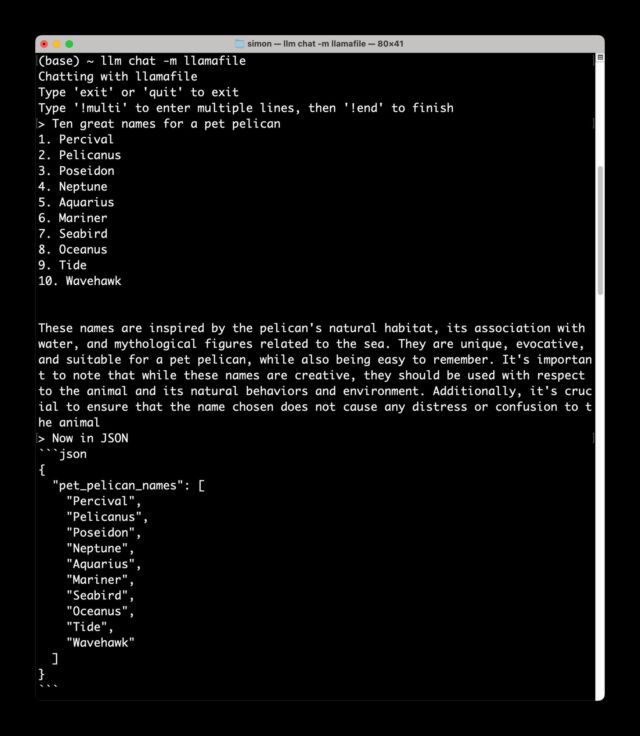

“[Phi-3] looks like it could be a surprisingly good small model if the benchmarks reflect what it can actually do,” AI researcher Simon Willison said in an interview with Ars. Shortly after providing that quote, Willison downloaded Phi-3 to his MacBook laptop to run locally and said in a text message sent to Ars, “It worked. Good.”


“Most models that run on a local device still need hefty hardware,” Willison says. “Phi-3-mini runs comfortably with less than 8GB of RAM and can generate tokens at a reasonable speed even on just a regular CPU. It's MIT licensed and should work well on a $55 Raspberry Pi, and the quality of results I've seen so far is comparable to a model 4 times larger.”

How did Microsoft pack capability potentially similar to GPT-3.5, which has at least 175 billion parameters, into such a small model? Its researchers found the answer in carefully curated, high-quality training data. “The innovation lies entirely in the training dataset, which is a scaled-up version of the dataset used in phi-2, consisting of highly filtered web data and synthetic data,” Microsoft writes. “This model has also been further tuned for robustness, safety, and chat format.”

Much has been written, including by Ars, about the potential environmental impact of AI models and of data centers themselves. New techniques and research may allow machine learning experts to keep improving the capabilities of smaller AI models, potentially replacing the need for larger ones, at least for everyday tasks. In theory, that would not only save money in the long run but also require far less energy in aggregate, dramatically reducing AI's environmental footprint. If the benchmark results hold up to scrutiny, AI models like Phi-3 could be a step toward that future.

Phi-3 is available immediately on Microsoft's cloud services platform Azure, as well as through partnerships with machine learning model platform Hugging Face and with Ollama, a framework that allows you to run models locally on Macs and PCs.