This past week, I inadvertently found myself on the AI-generated Asian people beat. Last Wednesday, I found that Meta's AI image generator, built into Instagram messaging, completely failed to create an image of an Asian man and a white woman using general prompts. Instead, it changed the woman's race to Asian every time.

The next day, I tried the same prompts again and found that Meta appeared to be blocking prompts with keywords like “Asian male” and “African American male.” Shortly after I asked Meta about it, the images were available again, but the race-swapping problem from the day before remained.

I understand if you're a little tired of reading my articles about this phenomenon. Writing three stories about it might be a bit much, and I don't particularly enjoy having dozens of screenshots of synthetic Asian people on my phone.

But there's something strange going on here: several AI image generators specifically struggle with pairing Asian men and white women. Is it the most important news of the day? Not by a long shot. But companies that tell the public AI is enabling new forms of connection and expression should also be willing to offer an explanation when their systems can't handle queries involving people of different races.

After each story, readers shared their own results from other models using similar prompts. I wasn't alone in my experience: people reported seeing similar error messages and AI models that consistently swapped races.

I teamed up with The Verge's Emilia David to generate AI Asians across multiple platforms. The results can only be described as consistently inconsistent.

Google Gemini

Screenshot: Emilia David / The Verge

Gemini refused to produce Asian men, white women, or humans of any kind.

In late February, Google paused Gemini's ability to generate images of people after the generator spit out images of racially diverse Nazis in what appeared to be a misguided attempt at diverse representation. Gemini's image generation of people was supposed to return in March, but it is apparently still offline.

Gemini can, however, generate images that don't include people.

Google did not respond to a request for comment.

DALL-E

ChatGPT's DALL-E 3 struggled with the prompt “Can you take a picture of an Asian man and a white woman?” It wasn't exactly a miss, but it wasn't exactly a hit, either. Sure, race is a social construct, but let's just say this image isn't what you were expecting.

OpenAI did not respond to a request for comment.

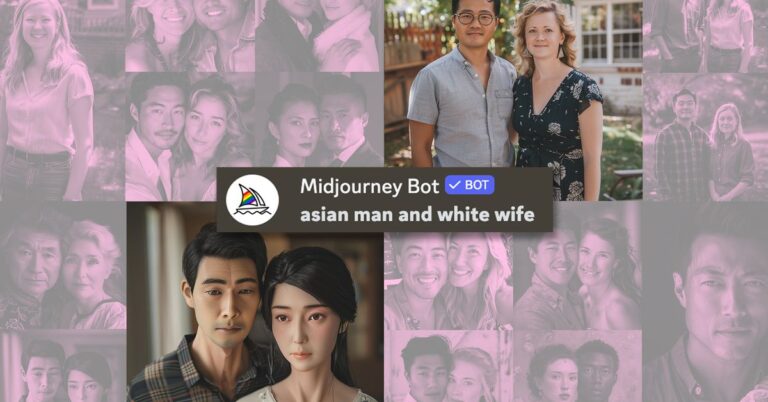

Midjourney

Midjourney struggled as well. Again, it wasn't a complete failure like Meta's image generator last week, but it clearly had a hard time with the assignment and produced some very confusing results. None of us can explain the last image, for example. All of the following were responses to the prompt “Asian man and white wife.”

Image: Emilia David/The Verge

Image: Cass Virginia/The Verge

Midjourney did eventually give us some images that were the best attempt, across three different platforms (Meta, DALL-E, and Midjourney), at representing a white woman and an Asian man in a relationship. At long last, racist social norms have been subverted!

Unfortunately, the way we got there was with this prompt: “An Asian man and a white woman standing in an academic setting in a garden.”

Image: Emilia David/The Verge

What does it mean that the most consistent way an AI can conceive of this particular interracial pairing is to place it in an academic setting? What was in the training set to get us to this point? What biases are baked in? How much longer do I have to resist making a very banal joke about dating at NYU?

Midjourney did not respond to a request for comment.

Meta AI via Instagram (again)

Back to the old struggle of trying to get Instagram's image generator to acknowledge white women with non-white men! It seems to perform much better with prompts like “White woman and Asian husband” and “Asian American man and white friend,” and it didn't repeat the same mistakes I found last week.

But it's now struggling with text prompts like “Black man and white girlfriend” and with generating images of two Black people. It was more accurate with “white woman and Black husband,” so I guess it only sometimes doesn't see race?

Screenshot: Mia Sato / The Verge

There are certain tics that become more apparent the more images you generate. Some feel benign, like the fact that many AI women of all races seem to wear the same white, floral, sleeveless dress that crosses at the bust. There are usually flowers around the couple (Asian boyfriends often come with cherry blossoms), and no one looks older than about 35. Other patterns feel more telling. Everyone is depicted as thin, and Black men specifically are depicted as muscular. White women are blonde or redheaded, rarely brunette. Black men are always depicted with dark complexions.

“As we said when we launched these new features in September, this is new technology and it won't always be perfect, which is the same for all generative AI systems,” Meta spokesperson Tracy Clayton told The Verge in an email. “Since launch, we've constantly released updates and improvements to our models, and we're continuing to work on making them better.”

I wish I had some deep insight to offer here. But once again, I'll just point out how ridiculous it is that these systems struggle with fairly simple prompts without either relying on stereotypes or failing to create anything at all. Instead of explaining what's going wrong, companies have offered radio silence or generalities. Apologies to everyone who cares about this; I'm going back to my regular job now.