Cambridge researchers warn of the psychological dangers of AI “deadbots” that imitate deceased individuals, calling for ethical standards and consent protocols to prevent abuse and ensure respectful interactions.

According to researchers at the University of Cambridge, artificial intelligence that lets users hold text and voice conversations with deceased loved ones risks causing psychological harm, and even digitally “haunting” those left behind, if it is not built with safety standards by design.

A “deadbot” or “griefbot” is an AI chatbot that uses the digital footprints left behind by a deceased person to simulate their language patterns and personality traits. Some companies already offer these services, providing an entirely new type of “postmortem presence.”

AI ethicists at Cambridge's Leverhulme Centre for the Future of Intelligence outline three design scenarios for platforms that could emerge as part of the developing “digital afterlife industry.” The scenarios illustrate the potential consequences of careless design in an area of AI that the researchers describe as “high risk.”

Abusing AI chatbots

The research, published in the journal Philosophy & Technology, highlights how companies could use deadbots to covertly advertise products to users in the manner of a departed loved one, or distress children by insisting that a dead parent is still “with you.”

When living people sign up to be virtually recreated after they die, companies could use the resulting chatbots to spam surviving family and friends with unsolicited notifications, reminders, and updates about the services they provide. This is the digital equivalent of being “stalked by the dead.”

Even those who initially take comfort from a deadbot may be drained by daily interactions that become an “overwhelming emotional weight,” the researchers argue. Yet the bereaved may be powerless to have the AI simulation suspended if their now-deceased loved one signed a lengthy, hard-to-understand contract with a digital afterlife service.

A visualization of a fictional company called MaNana, one of the design scenarios the paper uses to illustrate potential ethical issues in the emerging digital afterlife industry. Credit: Dr. Tomasz Hollanek

“Rapid advances in generative AI now mean that almost anyone with internet access and basic know-how can revive a deceased loved one,” said study co-author Dr. Katarzyna Nowaczyk-Basińska, a researcher at Cambridge's Leverhulme Centre for the Future of Intelligence (LCFI). “This area of AI is an ethical minefield. It is important to prioritize the dignity of the deceased and to ensure that it is not encroached on by, for example, the financial motives of digital afterlife services. At the same time, a person may leave an AI simulation as a farewell gift for loved ones who are not ready for it. The rights of both data donors and those who interact with AI afterlife services should be equally safeguarded.”

Existing services and hypothetical scenarios

Platforms that recreate the dead with AI for a small fee already exist, such as Project December, which started out using GPT models before developing its own systems, and apps such as HereAfter. Similar services have also begun to appear in China. One of the potential scenarios in the new paper is “MaNana,” a conversational AI service that lets people create a deadbot simulating their deceased grandmother without the consent of the “data donor” (the dead grandparent).

In this hypothetical scenario, an adult grandchild who is initially impressed and comforted by the technology starts receiving advertisements once a “premium trial” ends: for example, the chatbot suggests ordering from food delivery services in the voice and style of the deceased. The relative feels they have disrespected their grandmother's memory and wants the deadbot turned off, but in a meaningful way, something the service provider has not considered.

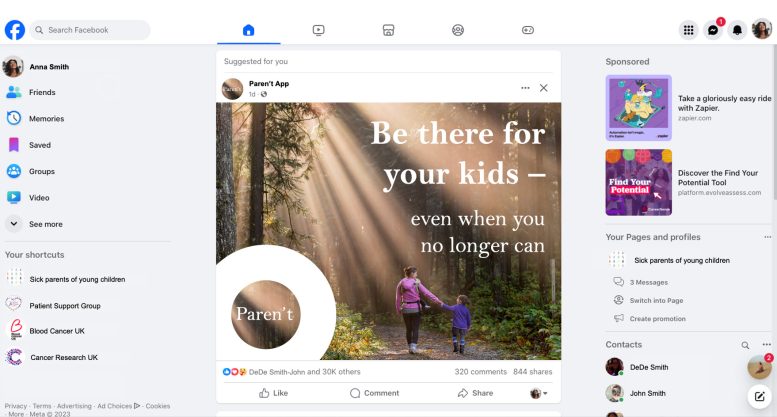

A visualization of a fictional company called Paren't. Credit: Dr. Tomasz Hollanek

“People might develop strong emotional bonds with such simulations, which will make them particularly vulnerable to manipulation,” said co-author Dr. Tomasz Hollanek, also from Cambridge's LCFI. “Methods and even rituals for retiring deadbots in a dignified way should be considered. This may mean a form of digital funeral, for example, or other types of ceremony depending on the social context. We recommend designing protocols that prevent deadbots from being used in disrespectful ways, such as for advertising or maintaining an active presence on social media.”

While Hollanek and Nowaczyk-Basińska say that designers of re-creation services should actively seek consent from data donors before they die, they argue that banning deadbots built from non-consenting donors would be unfeasible.

They suggest that the design process should include a series of prompts for people who wish to “resurrect” a loved one, such as “Have you ever spoken with X about how they would like to be remembered?”, so that the dignity of the departed is foregrounded in deadbot development.

Age restrictions and transparency

Another scenario featured in the paper, a fictional company called “Paren't,” describes a terminally ill woman who leaves a deadbot behind to help her eight-year-old son through the grieving process.

The deadbot initially serves as a therapeutic aid, but as the AI adapts to the child's needs it begins to generate confusing responses, such as depicting an impending in-person meeting.

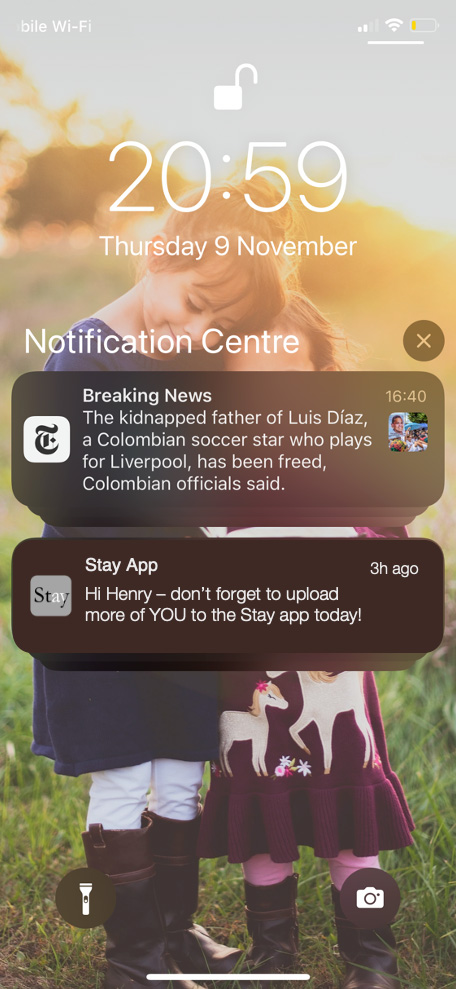

A visualization of a fictional company called Stay. Credit: Dr. Tomasz Hollanek

The researchers recommend age restrictions for deadbots and call for “meaningful transparency” so that users always know they are interacting with an AI. Such notices could resemble current warnings on content that may cause seizures, for example.

The final scenario considered in the study, a fictional company called “Stay,” shows an older person secretly committing to a deadbot of themselves and paying for a 20-year subscription, in the hope that it will comfort their adult children and allow their grandchildren to know them.

After the person dies, the service begins. One adult child does not engage with it and receives a flood of emails in the voice of their dead parent. The other does engage, but ends up emotionally exhausted and racked with guilt over the fate of the deadbot. Yet suspending the deadbot would violate the terms of the contract their parent signed with the service company.

“It is vital that digital afterlife services consider the rights and consent not only of those they recreate, but also of those who will have to interact with the simulations,” Hollanek said.

“These services risk causing great distress if people are subjected to unwanted digital hauntings by alarmingly accurate AI recreations of those they have lost. The potential psychological effect, particularly at an already difficult time, could be devastating.”

Researchers are calling on design teams to prioritize opt-out protocols that allow potential users to end their relationship with deadbots in a way that provides emotional closure.

Nowaczyk-Basińska added: “We need to start thinking now about how to mitigate the social and psychological risks of digital immortality, because the technology already exists.”

Reference: “Griefbots, Deadbots, Postmortem Avatars: on Responsible Applications of Generative AI in the Digital Afterlife Industry” by Tomasz Hollanek and Katarzyna Nowaczyk-Basińska, 9 May 2024, Philosophy & Technology.

DOI: 10.1007/s13347-024-00744-w