Image credits: Alexander Shatov/Unsplash

Social media service Snap announced Tuesday that it plans to add watermarks to AI-generated images on its platform.

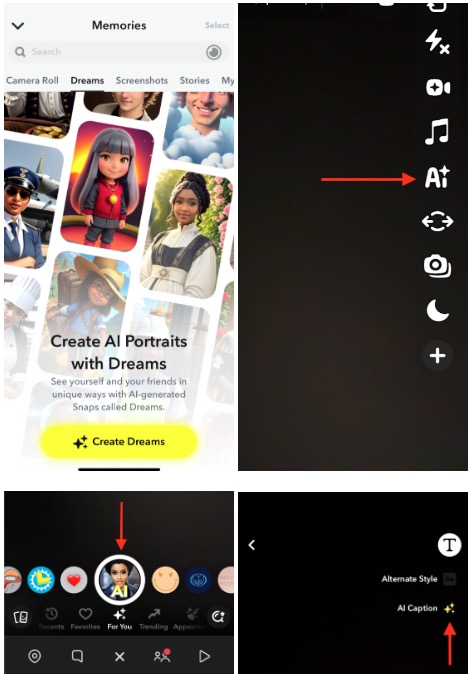

The watermark, a translucent version of the Snap logo with a glitter emoji, will be added to any AI-generated image that is exported from the app or saved to the camera roll.

The watermark, a glittering Snap logo, indicates an AI-generated image created using Snap's tools. Image credits: Snap

The company said on its support page that removing watermarks from images violates its terms of service. It's unclear how Snap detects the removal of these watermarks. We have reached out to the company for more information and will update this story when we hear back.

Other tech giants like Microsoft, Meta, and Google are also taking steps to label and identify images created with AI-powered tools.

Currently, Snap allows paid subscribers to create or edit AI-generated images using Snap AI. Its selfie-focused feature, Dreams, also lets users add flavor to their photos using AI.

In a blog post outlining its AI safety and transparency efforts, the company explained that it marks AI-powered features, such as Lenses, with a visual marker that resembles a glitter emoji.

Snap lists the markers it uses for features powered by generative AI. Image credits: Snap

The company also said it has added context cards to AI-generated images created with tools like Dreams to better inform users.

In February, Snap partnered with HackerOne to introduce a bug bounty program aimed at stress testing its AI image generation tools.

“We want Snapchatters from all walks of life to have fair access and expectations when using all features within our app, especially our AI-powered experiences. With this in mind, we are conducting additional testing to minimize potentially biased AI results,” the company said at the time.

Snapchat's efforts to improve AI safety and moderation follow the controversy its “My AI” chatbot sparked when it launched in March 2023, as some users succeeded in getting the bot to respond to and talk about sex, drinking, and other unsafe subjects. The company then introduced controls in Family Center to allow parents and guardians to monitor and limit their children's interactions with AI.