The steady rise of AI over the past few years, and the accelerated growth of generative AI adoption since OpenAI announced ChatGPT in November 2022, positions Intel as a challenger in the chip market it has long dominated. I've changed it.

Sure, Intel still commands a significant portion of the consumer and enterprise CPU space, but the focus is on AI workloads now and into the future. And AI isn't the only reason Intel can no longer own the industry the way it once did. It didn't help that internal design and manufacturing challenges led to expensive and embarrassing product delays. And trends like cloud computing and the edge paved the way. For both traditional rivals and startups. But more than a decade ago, NVIDIA invested all of its funds into AI, using it as the north star for future innovation.

And it paid off, and as we said in February, the GPU maker is on the verge of becoming a $100 billion company, with sales of $22.1 billion in the fourth quarter of 2024, up 265.3% year over year. Ta. By a combination of hardware and software. Meanwhile, Intel revealed earlier this month that its key foundry business had suffered a $7 billion loss, prompting CEO Pat to review a multi-year effort to move the company in the right direction. It highlights the hurdles Gelsinger faces.

Far from dampening its trademark enthusiasm, Gelsinger spoke in a keynote address at the company's Intel Vision 2024 event in Arizona, praising Intel's commitment to advancing AI everywhere, including the adoption of open systems. He offered a roadmap to further his vision and appeared to relish the role of underdog. The source approach promises silicon that outperforms anything Nvidia offers on the market, delivering a portfolio that addresses AI workloads in the data center and cloud, PC, and edge.

open architecture and intel

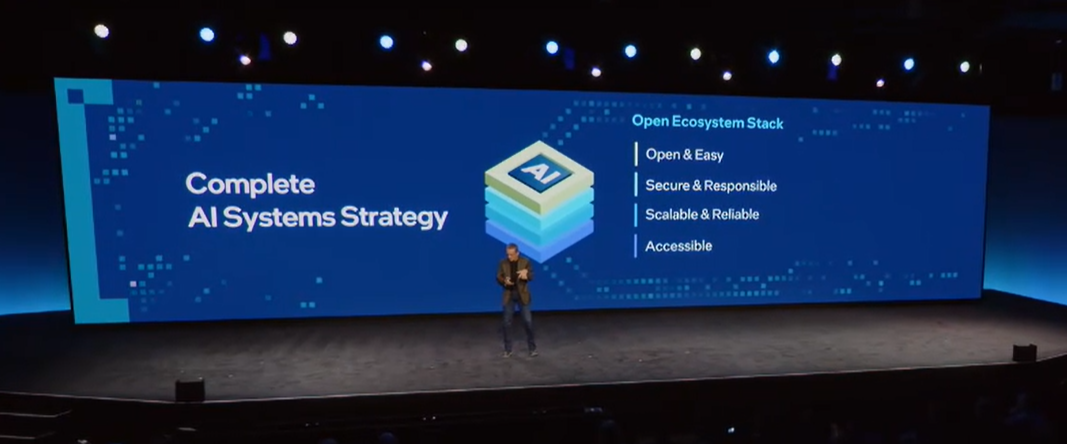

The CEO also said the AI market will evolve in favor of Intel, noting that demand will move toward open platforms and that Intel has strong support from major AI and IT companies, while companies will stick to architectures. He insisted that he would. They've known for decades whether they can deliver the same or better AI performance and efficiency than Nvidia.

It's unclear whether it will play out that way, but Gelsinger said that when he introduced the upcoming latest generation of Xeon server chips (the Xeon 6 family) and Gaudi 3 AI accelerators and talked about his open systems strategy. I had a firm idea of my own.

“One of the key questions our AI customers ask us is, ‘How do I deploy it?’” he said. “And almost all development currently being deployed at GenAI is moving to higher-level environments, the PyTorch framework, and other community models from Hugging Face. The industry is rapidly moving away from proprietary. [Nvidia] CUDA model. With literally just a few lines of code, you can run industry-standard frameworks on power-performance, efficient cloud infrastructure. ”

Inter has a mountain to climb. Gaudi 3 comes after NVIDIA announced Blackwell datacenter GPUs last month, including the GB200 Grace Blackwell Superchip, which combines two B200 GPUs and one Grace CPU linked by next-generation NVLink connectivity. Attesting to Blackwell's progress, Nvidia founder and CEO Jensen Huang said at the company's GTC 2024 show that training a 1.8 trillion parameter AI model requires 8,000 previous I mentioned that I needed a Hopper GPU and 15 megawatts of power. With Blackwell, he only needs 2,000 accelerators and consumes 4 megawatts of power.

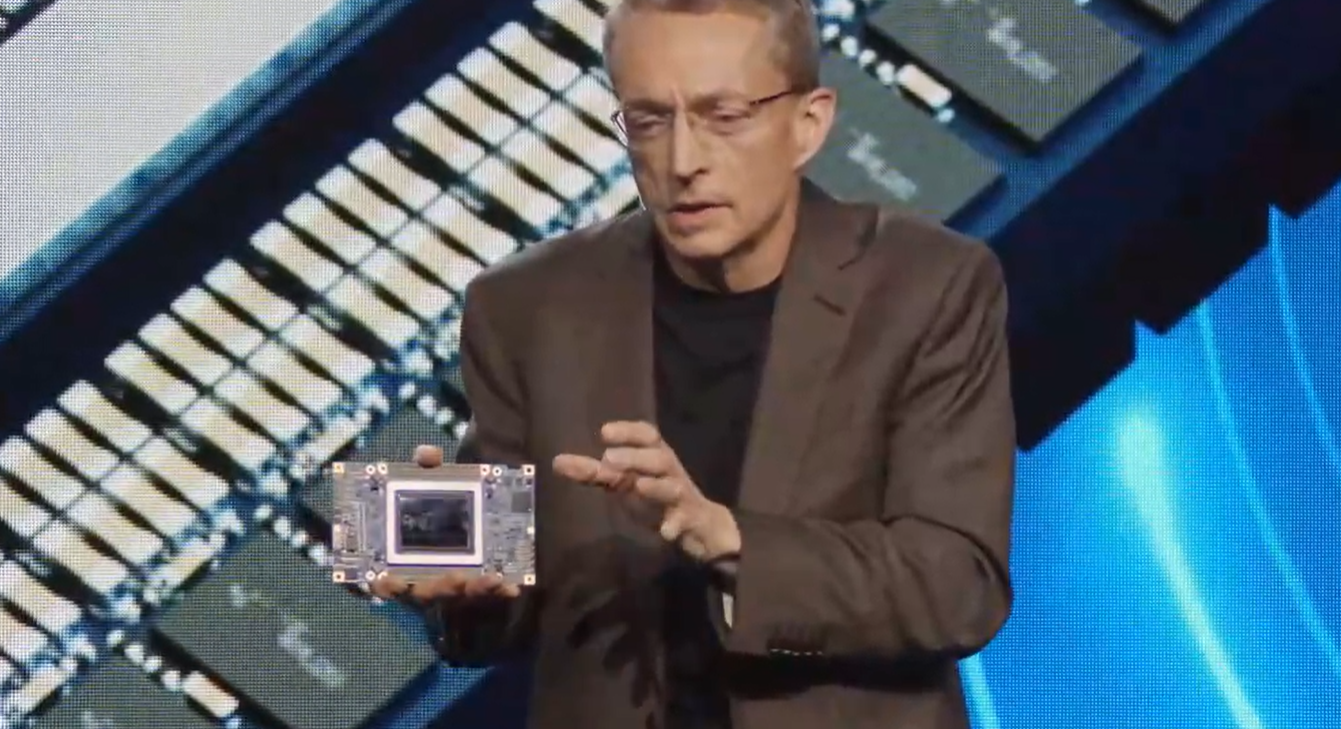

Gaudi 3: Better performance at lower cost

Gelsinger pushed back, touting Gaudi 3's performance and TCO. The accelerator is 50 percent better at inference and 40 percent more power efficient than Nvidia's current accelerators, making the H100 hard to find given the rapidly increasing demand for compute power for AI workloads. in some cases. Fee.

In interviews with journalists and analysts after the keynote, Gelsinger said he was not ready to discuss Gaudi 3's price, but added, “I'm very confident it will be significantly below the price of the H100 or Blackwell.” he added. It's not just a little under, it's a lot under, and that can be a factor in TCO. ”

New Xeon 6 branding represents the latest CPUs for the data center, cloud, and edge. “Sierra Forest” with E-core will be available later this quarter, and “Granite Rapids” and its performance core will be available shortly thereafter. Intel boasts 2.4x higher performance per watt and 2.7x higher rack density compared to 2nd generation Xeon with Sierra Forest.

Once again, Gelsinger positioned the CPU as a more open alternative with performance comparable to Nvidia's high-powered GPUs and CUDA framework. The new HE Xeon supports HE MXFP4 data format. He explained that the MXFP4 data format is a narrow format that enables memory compression, efficient AI training, and AI inference with “minimal loss of accuracy.” With MXFP4, Granite Rapids can reduce next-token latency by up to 6.5x compared to 4.th Xeon generation capable of running 70 billion parameter Llama-2 models using FP16.

love the architecture that is with you

This is attractive to companies that have been using Xeon in their data centers for many years, are accustomed to Intel architecture, and tend to gravitate toward open environments.

“The data, the data stack, and almost all of the data stack runs on Xeon,” he said. “This is where the database runs. Amazingly, we are now at a stage of about 22 years of cloud migration where the vast majority of computing (over 60%) is done in the cloud or in cloud implementations. 66 percent of data is unused and 90 percent of unstructured data is unused.”

Enterprises can leverage this unused on-premises data for AI by employing search augmentation and generation (RAG) techniques that include company information in the data used to train large language models.

“The Xeon is clearly a great machine to run these RAG environments and make LLM on the data more effective and efficient,” said Gelsinger. “Xeon is becoming more than just a database front end, it can also run LLM. You don't need new management, new networking, new security models, new IT knowledge, or proprietary networking. It only works with Xeon, the industry standard that we know and love.”

According to the CEO, open architecture and hardware and software systems are key.

“We're obviously interested in how we move these standards forward. Standard management, no network lock-in, no security issues, LLM running natively on Xeon, but also Gaudi 2 and 3, Nvidia We can also work with other companies,” he says. Said. “Now is the time to build an open platform for enterprise AI. We are working to address critical requirements such as reliable reliability, availability, security, and support issues.”