This is a very strange rabbit hole.

Shortage of Supply

As AI companies begin to run out of training data, many are considering so-called “synthetic data,” but it remains unclear whether such data will actually work.

As the New York Times reports, synthetic data presents, at least on the surface, a simple solution to the shortage of AI training data and to other problems. What if AI could be trained on data generated by AI? In addition to solving the lack of training data, that could also address the pressing problem of AI copyright infringement.

But while companies like Anthropic, Google, and OpenAI are all working on creating high-quality synthetic data, no one has yet achieved it.

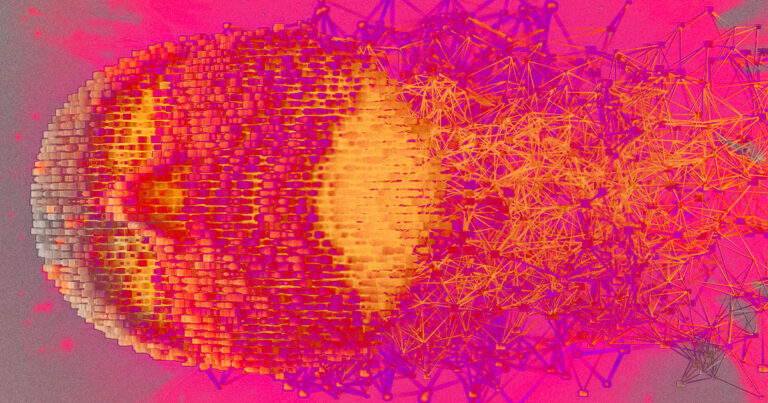

So far, AI models built on synthetic data have tended to run into problems. Australian AI researcher and podcaster Jathan Sadowski has dubbed this problem “Habsburg AI.” The name is a nod to the Habsburg dynasty, whose very prominent chin reflected the family's penchant for intermarriage.

As Sadowski tweeted last February, the term refers to “a system overtrained with the output of other generative AIs that results in inbred mutants with possibly exaggerated and grotesque features.” In other words, something very much like the Habsburg jaw.

Last summer, Futurism interviewed another data researcher, Richard G. Baraniuk of Rice University, about his term for this phenomenon: “model autophagy disorder,” or “MAD” for short. It took just five generations of AI inbreeding for the Rice team's model to “explode,” in his words.

Synthetic Solution

The big question is: Can AI companies find a way to create synthetic data that doesn't upset the system?

According to the New York Times, OpenAI and Anthropic (a company founded by former OpenAI employees who wanted to create a more ethical AI, among other things) are both experimenting with a kind of checks-and-balances system. The first model generates the data, and the second model checks the accuracy of that data.
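The generate-then-check idea can be illustrated with a minimal Python sketch. Everything here is a hypothetical stand-in: `generate_examples` plays the role of the first model, `check_example` plays the role of the second, and the toy arithmetic task replaces real text. It is not how OpenAI or Anthropic actually implement their systems; it only shows the shape of the filtering loop.

```python
from typing import Callable, List, Tuple

# A synthetic example is a (question, proposed answer) pair.
Example = Tuple[str, int]


def generate_examples() -> List[Example]:
    """Hypothetical stand-in for the first model, which generates data.

    A real generator would be a language model. Here every third answer
    is deliberately wrong so the checker has something to reject.
    """
    examples = []
    for i in range(1, 7):
        answer = i + i
        if i % 3 == 0:
            answer += 1  # inject an error to simulate a bad generation
        examples.append((f"{i} + {i}", answer))
    return examples


def check_example(example: Example) -> bool:
    """Hypothetical stand-in for the second model, which checks accuracy.

    It recomputes the sum independently instead of trusting the generator.
    """
    question, answer = example
    left, right = question.split(" + ")
    return int(left) + int(right) == answer


def filter_synthetic(
    examples: List[Example], checker: Callable[[Example], bool]
) -> List[Example]:
    """Keep only the generated examples that the checker accepts."""
    return [example for example in examples if checker(example)]


accepted = filter_synthetic(generate_examples(), check_example)
print(f"kept {len(accepted)} of 6 generated examples")
```

In this toy version the checker is perfectly reliable, which is exactly what is not guaranteed when the checker is itself an AI model.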

So far, Anthropic has been the most outspoken about its use of synthetic data, noting that it uses a “constitution,” or list of guidelines, to train its two-model system, and that its latest LLM, Claude 3, was trained on “internally generated data.”

Although this is a promising concept, synthetic data research to date is spotty at best. Given that researchers do not really know how AI works in the first place, it is hard to imagine them getting synthetic data to work anytime soon.
