As AI-generated content proliferates online and clutters social media feeds, you may have noticed more images that evoke the uncanny valley effect: relatively ordinary scenes with surreal details such as extra fingers or gibberish words.

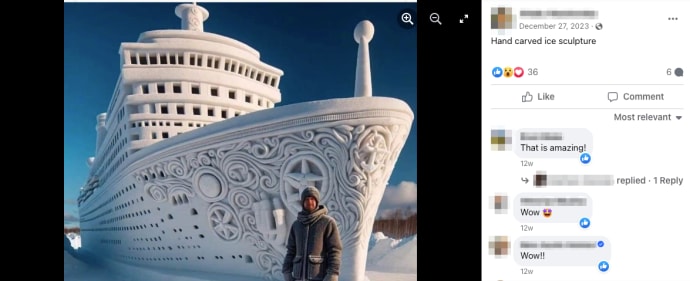

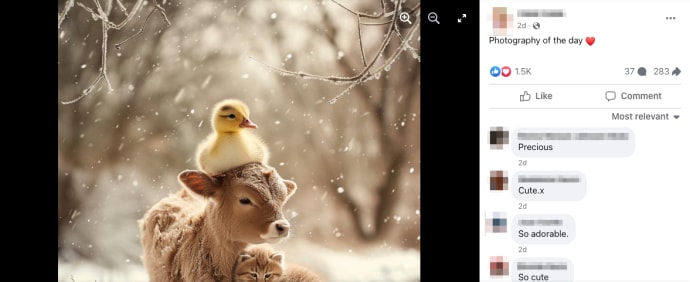

Among these misleading posts, younger users have spotted some clearly fake images (e.g. skiing dogs and toddlers, a baffling “hand-carved” ice sculpture and a giant crocheted cat). But art created by AI is not obvious to everyone. Older users, generally those in Generation X and above, appear to be falling for these visuals en masse on social media. That isn't just evidenced by TikTok videos or a quick look at your mom's Facebook activity; there's data behind it.

As younger users migrate to flashier apps like TikTok and Instagram, Facebook is becoming increasingly popular among older adults looking for entertainment and companionship. Recently, Facebook's algorithms appear to have been pushing outlandish AI images into users' feeds to sell products and attract followers, according to a preprint paper published March 18 by researchers at Stanford University and Georgetown University.

If you take a look at the comments section of these AI-generated photos, you'll see older users describing them as “beautiful” and “amazing,” often adorning the posts with heart and prayer emojis. Why do older people not only engage with these pages, but also seem to enjoy them?

At the moment, scientists do not have a definitive answer about the psychological effects of AI art. That is because generators such as DALL-E, Midjourney, and Adobe Firefly, which run on artificial intelligence models trained on up to millions of images, have only been available to the public for about the past two years.

But experts have a hunch, and the explanation isn't as simple as you might expect. Still, knowing why your older friend or relative is confused can provide tips to prevent them from falling victim to scams and harmful misinformation.

Why AI images fool the elderly

Cracking this code is especially important because tech companies like Google tend to overlook older users during internal testing, Björn Hellmann, a neuroscientist at the University of Toronto who studies the effects of aging on communication, told The Daily Beast.

“In these kinds of spaces, things are often done without any consideration of the aging perspective,” he says.

While cognitive decline may seem like a reasonable explanation for this machine-induced discrepancy, early research suggests that a lack of experience and familiarity with AI may help explain the gap in comprehension between younger and older viewers. For example, in an August 2023 AARP/NORC survey of approximately 1,300 U.S. adults age 50 and older, only 17% of participants said they had read or heard “a lot” about AI.

So far, the few experiments analyzing how older adults recognize AI seem to be consistent with the Facebook phenomenon. In a study published last month in the journal Scientific Reports, scientists showed 201 participants a mix of AI- and human-generated images and evaluated their responses based on factors such as age, gender, and attitudes toward technology. The researchers found that older participants were more likely to believe that an AI-generated image was created by a human.

“I don't think anyone has ever found this before, but we don't know exactly how to interpret it,” study author Simone Grassini, a psychologist at the University of Bergen in Norway, told The Daily Beast.

Although research on people's perceptions of AI-generated content is scarce overall, researchers have found similar results for AI-generated audio. Last year, University of Toronto professor Björn Hellmann reported that older subjects were less able to distinguish between human-generated and AI-generated speech than younger subjects.

The finding was “really unexpected,” he said, because the purpose of the experiment was to determine whether AI voices could be used to study how older adults perceive sounds in background noise.

Overall, Grassini believes that AI-generated media of all kinds, including audio, video, and still images, could more easily fool older audiences through a broader “blanket effect.” Both Hellmann and Grassini suggest that older generations may not learn about the characteristics of AI-generated content and may encounter it less often in their daily lives, leaving them more vulnerable when that content appears on their screens. Although cognitive decline and hearing loss (in the case of speech) may be at play, Grassini still observed the effect in people in their late 40s and early 50s.

Additionally, young people have grown up in an age of online misinformation and are accustomed to manipulated photos and videos, Grassini added. “We live in a society where there are more and more fakes.”

How to help friends and relatives spot a bot

Although the flood of fake content online can be difficult to spot, older people generally have a clear view of the bigger picture. In fact, they may be more aware of the dangers of AI-powered content than younger generations.

A 2023 MITRE-Harris poll of more than 2,000 people found that a higher percentage of baby boomer and Gen X participants were worried about the impact of deepfakes compared with Gen Z and Millennial participants. Among older respondents, a higher percentage also called for regulation of AI technology and more investment from the tech industry to protect the public.

Research has also shown that older people may be able to identify false headlines and articles more accurately than younger people, or at least at a similar rate. Older people also tend to consume more news than younger generations and may have accumulated more knowledge about certain subjects over their lifetime, making them harder to fool.

“Context of content is very important,” Andrea Hickerson, dean of the University of Mississippi School of Journalism and New Media, who has researched cyberattacks on older adults and deepfake detection, told The Daily Beast. “If it's a subject that anyone, senior or not, might know a lot about, it gives them the ability to view that information.”

Either way, scammers are targeting older adults with increasingly sophisticated generative AI tools. Deepfake audio and images sourced from social media can be used to impersonate a grandchild calling from jail to ask for bail money, or to fake the appearance of a relative on a video call.

Fake videos, audio and images could also influence older voters ahead of the election. This could be particularly harmful, as people over 50 tend to make up the majority of the overall U.S. electorate.

To help the elderly in our lives, Hickerson said it's important to spread awareness about generative AI and the risks it can create online. Start by educating yourself on the tell-tale signs of a fake image, such as textures that are too smooth, teeth that look weird, or perfectly repeating patterns in the background.

She added that it can help to explain what we do and don't know about social media algorithms and how they target older adults. It's also helpful to clarify that misinformation can come from friends and loved ones. “We need to convince people that it's okay to trust Aunt Betty and not Aunt Betty's content,” she says.

No one is safe

But as deepfakes and other AI creations become more sophisticated by the day, it can be difficult for even the most knowledgeable tech experts to spot them. Even if you think you're particularly savvy, these models can already stump you. The website This Person Does Not Exist offers eerily convincing photos of fake AI faces that don't always bear even subtle traces of their computer origins.

Researchers and tech companies have created algorithms to automatically detect fake media, but these cannot work with 100% accuracy, and rapidly evolving generative AI models will eventually outpace them. (Midjourney, for example, struggled for a long time to create realistic hands until it got smarter with a new version released last spring.)

Regulation and corporate accountability are key to countering the growing wave of invisible fake content and its social impact, Hickerson said. As of last week, more than 50 bills had been introduced in 30 states aimed at cracking down on the risks of deepfakes. And since the beginning of 2024, Congress has introduced a flurry of bills to address deepfakes.

“It's really about regulation and liability,” Hickerson said. “We need to talk about it more publicly, including all generations.”