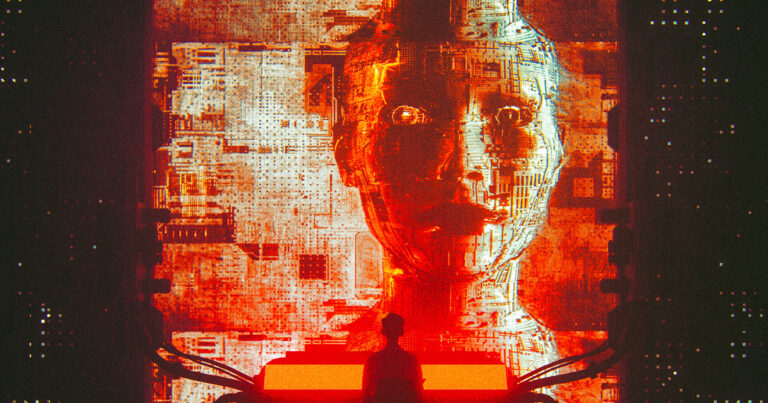

“Trust is the currency of the AI era.”

Turn the Page

AI is not as popular with the world's population as its boosters believe.

As Axios reports, a new poll of 32,000 respondents worldwide, conducted by the consulting firm Edelman, finds that eighteen months after the so-called “AI revolution” kicked off with OpenAI's release of ChatGPT in November 2022, the public is already losing trust in the technology.

“Trust is the currency of the AI era, but currently our innovation account is dangerously overdrawn,” Justin Westcott, the firm's global technology chair, told Axios. “Companies need to look beyond the mechanics of AI and address its true cost and value: the why and for whom.”

Despite Silicon Valley's insistence that AI can be trusted, recent polls, Edelman's among them, show that people remain unsure whether the technology is useful at all. Opinions are divided, and trust appears to be declining.

Globally, trust in AI has fallen from 61% in 2019 to just 53%, according to the Edelman poll. In the US, where job insecurity is on the rise and many people say they have lost or expect to lose their jobs to AI, trust is even lower: five years ago, 50% of people said they trusted AI, compared with just 35% today.

The drop since 2019 is not all that surprising. Before 2022, AI was more of a science-fiction concept, or something working quietly behind the scenes at companies, than a tangible part of everyday life. With the arrival of ChatGPT, distant fears that AI would take our jobs, or perhaps enslave us all, suddenly felt more real, and far more people got hands-on time with the technology.

Management Style

Perhaps the biggest takeaway from the company's Trust Barometer is that, as CEO Richard Edelman put it in a statement, people around the world believe AI innovation so far has been “poorly managed.” On average, respondents held that view by a 2-to-1 margin.

Furthermore, while a whopping 76% of people trust the technology industry in general, only half trust AI. With tech giants like Microsoft and Meta investing heavily in AI, that gap deserves a closer look.

Overall, Edelman's respondents said they look to scientists to inform them about AI safety, which gives the research community an opportunity to step up as an authority on the subject.

“Those who prioritize responsible AI, partner transparently with communities and governments, and put control back in the hands of users will not only lead the industry but will also rebuild the bridges of trust that technology has lost along the way,” Westcott told Axios.
