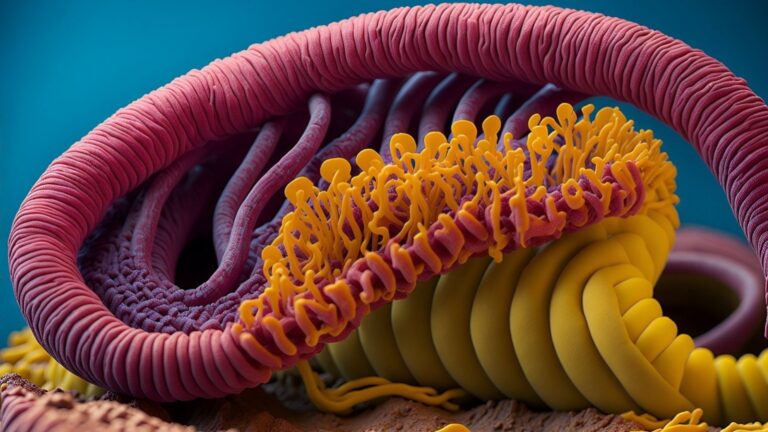

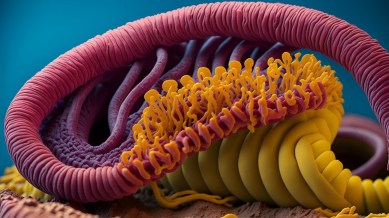

Researchers have created a new AI worm called Morris II that can infect popular AI models such as ChatGPT and Gemini and steal information such as credit card information from AI-powered email assistants.

A group of researchers has created a new AI worm called “Morris II” that can use a variety of methods to steal sensitive data, send spam emails, and spread malware. The research paper, named after the first worm to shake up the Internet in 1988, suggests that this generative AI worm could spread among artificial intelligence systems.

Morris II can influence generative AI email assistants, extract data from AI-enabled email assistants, and even remove security measures in popular AI-powered chatbots like ChatGPT and Gemini. AI worms can easily move through AI systems without being detected using self-replicating prompts.

you are exhausted

Monthly free episode limit.

Read more stories for free

using your Express account.

Subscribe now with a special 15% discount Use code: ELECTION15

This premium article is currently free.

Sign up to read more free stories, access offers from our partners, and more.

Subscribe now with a special 15% discount Use code: ELECTION15

This content is exclusive to subscribers.

Subscribe today and get unlimited access to Premium articles only at The Indian Express.

Ben Nassi of Cornell Tech, Stav Cohen of Israel Institute of Technology, and Ron Button of Intuit use a large language model in which text prompts use additional data to infect email assistants, and that data is transferred to GPT. -4 or says it will be sent to Gemini Pro to create the text. Content can defeat the safeguards of generative AI services and data can be stolen.

It has also been suggested that the image prompt technique could embed harmful prompts in photos, allowing email assistants to automatically forward messages and infect new email clients. Morris II could be used to mine sensitive information such as social security numbers and credit card information.

The researchers immediately alerted both OpenAI and Google to their findings. According to recent reports, wired, Google declined to respond, but an OpenAI spokesperson said it is committed to making its systems more secure and developers must use methods to ensure they are not working with harmful input. Ta.

© IE Online Media Services Pvt Ltd

Date first uploaded: March 3, 2024 11:15 IST