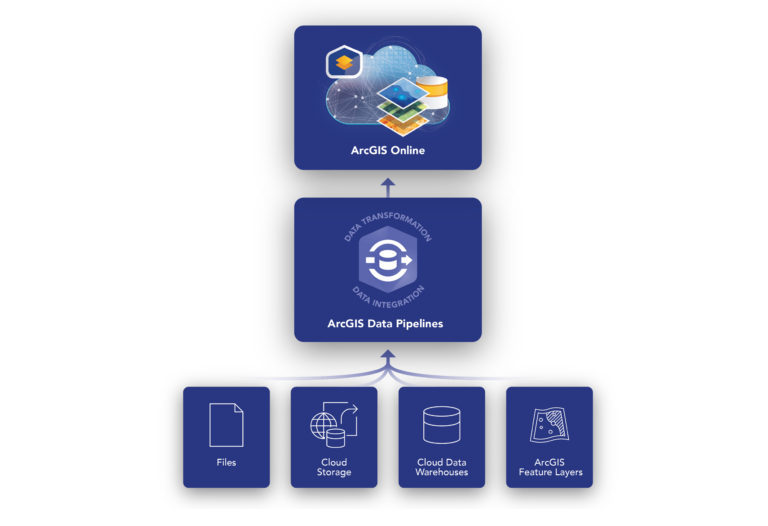

Effective geospatial analysis relies heavily on accessing, integrating, and preparing data from a variety of sources. This can be a time-consuming and tedious task, and becomes even more complex if the source data is updated regularly. ArcGIS Data Pipelines provides a comprehensive solution to this challenge. We're excited to announce that it's officially out of beta and now generally available on ArcGIS Online.

As a native data integration feature, Data Pipelines provides a fast and efficient way to ingest, engineer, and maintain data from a variety of locations, including multiple cloud-based data stores. A drag-and-drop interface minimizes the need for coding skills and simplifies the process of data integration and preparation. Additionally, scheduling features automate refreshes so your data stays current as the source data changes.

This blog post describes the enhancements and new tools in recent releases and how they streamline your data integration workflows. Read on to learn how Data Pipelines can unlock the full potential of your geospatial data.

What's new in ArcGIS Data Pipelines (February 2024)

In the February 2024 update, Data Pipelines includes all the features you know from the beta, and more! For more information about the beta releases of Data Pipelines, see the Introducing Data Pipelines in ArcGIS Online (beta release) and What's New in Data Pipelines (October 2023) blog posts.

New features in this update include support for JSON files, new data engineering tools, and new in-app experiences and dialogs. To learn more, check out the video demo and details below.

Guided tour of the data pipeline editor

Get started with Data Pipelines by taking a quick guided tour of the editor. The tour introduces the key concepts and features of data pipelines before you start building data integration workflows. It appears automatically the first time you log in, and after that you can open it at any time from the editor's toolbar.

Support for JSON files and complex fields

Data Pipelines now supports JSON files from Amazon S3, Microsoft Azure Storage, and public URL inputs. Note that JSON files cannot be used as file inputs, as direct upload to content is not yet supported.

There are also new tools and enhancements for working with complex field types, such as the array, map, and struct fields commonly found in JSON, GeoJSON, and Parquet files. These field types contain lists and dictionaries of information that are difficult to work with without the appropriate tools. The following new tools and enhancements have been added to help you make better use of the information stored in these field types.

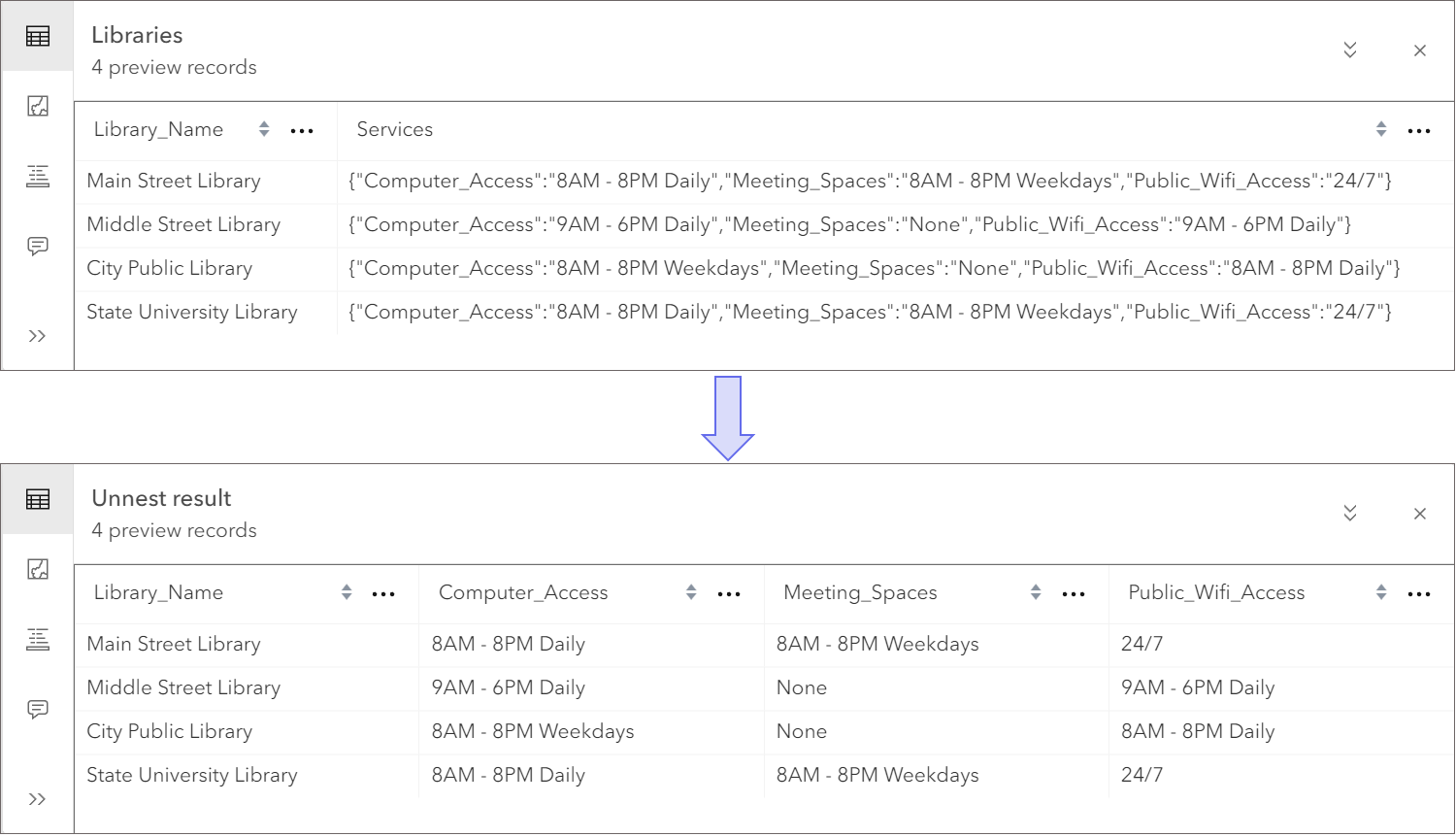

- The new Unnest Field tool extracts fields and values from array, map, and struct fields. For example, suppose you have a dataset representing the locations of libraries within a community. Each library record has a struct field value that contains a dictionary of all services offered by the library and the business hours associated with each service. Use the Unnest Field tool to return a new field containing the business hours for each service. The tool converts the dictionary into new fields and values, as illustrated with the sample data in the image above.

- The Arcade editor used with the Field Calculator now supports selecting array, map, and struct field types from the profile variables list. Using the example above, you can extract just the computer access information with an Arcade expression like this:

`$record.Services["Computer_Access"]`

- Like the Field Calculator, field selection has also been enhanced. You can now drill into the fields of a struct type and select only the values you want to keep. Again, using the sample data in the image above:

Drill down into the Services field and select the Public_Wifi_Access field using the field picker.
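To make the three ideas above a little more concrete outside the editor, here is a minimal Python sketch of what unnesting a struct, reading one nested value, and keeping only selected nested values amount to conceptually. It is not how Data Pipelines implements these tools. Only the names Services, Computer_Access, and Public_Wifi_Access come from the example; the library name, the hours, the helper functions, and the `Services_<key>` naming of the output fields are assumptions made for illustration.

```python
# Hypothetical library record. The hours and the library name are made up.
record = {
    "Name": "Central Library",
    "Services": {
        "Computer_Access": "9am-5pm",
        "Public_Wifi_Access": "8am-8pm",
    },
}


def unnest_struct(row, field):
    """Return a copy of row with the dictionary stored in `field`
    expanded into top-level fields named '<field>_<key>' (assumed naming)."""
    flat = {k: v for k, v in row.items() if k != field}
    for key, value in row[field].items():
        flat[f"{field}_{key}"] = value
    return flat


def select_nested(row, field, keys):
    """Return a copy of row that keeps only the chosen keys of a struct field."""
    result = dict(row)
    result[field] = {k: row[field][k] for k in keys}
    return result


# Conceptually similar to unnesting the Services field.
unnested = unnest_struct(record, "Services")

# Conceptually similar to the Arcade expression $record.Services["Computer_Access"].
computer_hours = record["Services"]["Computer_Access"]

# Conceptually similar to picking only Public_Wifi_Access in the field picker.
wifi_only = select_nested(record, "Services", ["Public_Wifi_Access"])
```

In this sketch the unnested record gains one flat field per service, while the selection step leaves the struct in place and simply trims it to the values you asked for.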

Map Fields tool

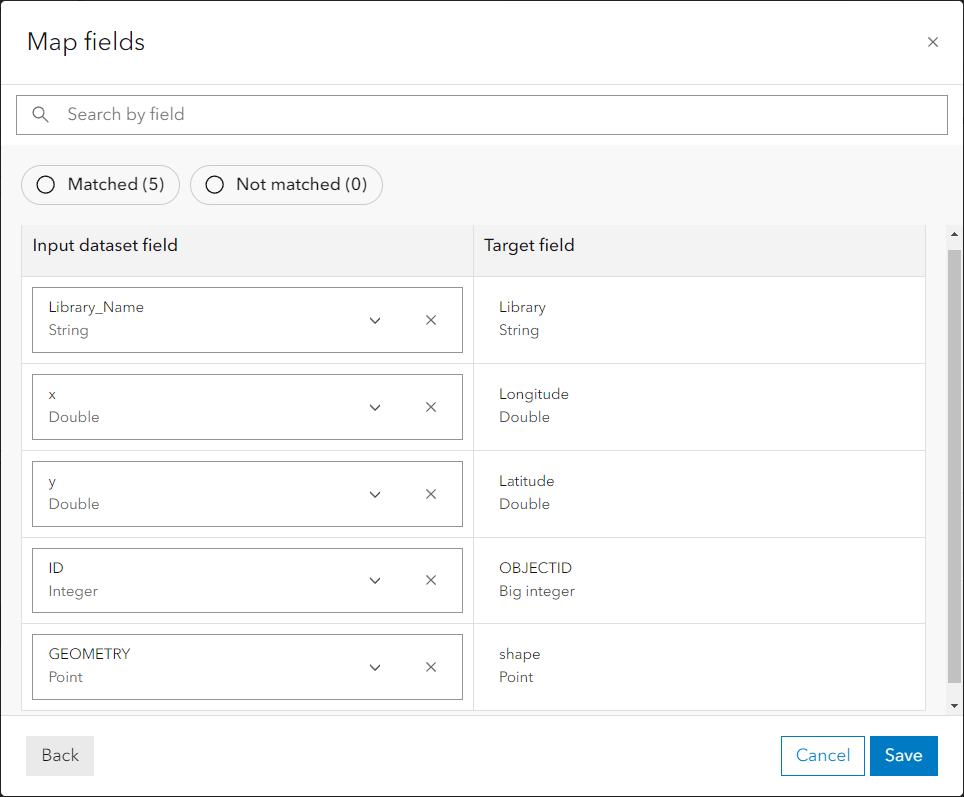

You can now efficiently update and standardize your dataset schemas using the new Map Fields tool. For example, Map Fields is often used to prepare datasets for the Merge tool, or to match fields to those of a feature layer that is updated by an output feature layer tool. See the screenshot below for an example of how to map input dataset fields to target fields.

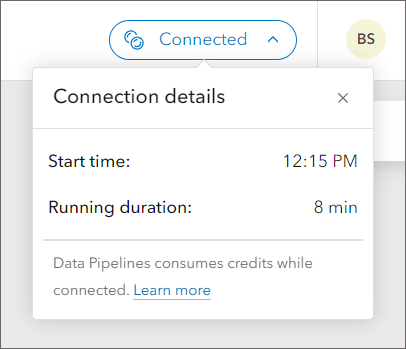
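As a rough illustration of why schema mapping matters before a merge, here is a minimal Python sketch. It is not the Map Fields tool itself: the field names, the sample rows, and the choice to drop unmapped fields are all assumptions made for this example.

```python
# Hypothetical mapping from input field names to target schema names.
FIELD_MAP = {
    "lib_name": "Name",
    "addr": "Address",
}


def map_fields(row, field_map):
    """Rename input fields to their target names.
    In this sketch, fields that are not in the mapping are dropped."""
    return {target: row.get(source) for source, target in field_map.items()}


# Two hypothetical datasets describing the same kind of feature
# with different schemas.
source_a = [{"lib_name": "Central Library", "addr": "1 Main St", "notes": "x"}]
source_b = [{"Name": "East Branch", "Address": "22 Oak Ave"}]

# Once source_a follows the target schema, the rows can be combined cleanly.
merged = [map_fields(row, FIELD_MAP) for row in source_a] + source_b
```

After mapping, every row in `merged` has the same two fields, which is the property a merge or a feature layer update depends on.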

Connection details dialog

Another new feature is the Connection details dialog. Use this dialog to see when the connection was started and how long it has been active. The Details link in the dialog provides more information, including how the connection works and how credits are consumed while using the data pipeline.

For more information

We want to hear from you! More features will be added to ArcGIS Data Pipelines in the future, and we'd love to hear your thoughts on which new features and enhancements would help your data preparation workflows. Share your ideas and ask questions in the Data Pipelines community.

For more information about the enhancements and improvements in the February update, see the What's New documentation. To learn more about the data integration capabilities of Data Pipelines, check out the Introducing Data Pipelines in ArcGIS Online (beta release) and What's New in Data Pipelines (October 2023) blog posts, and see the product documentation.